Search engine optimisation is often associated with keywords, content, and backlinks. However, behind every well-ranking website is a strong technical foundation that allows search engines to access and understand its pages.

Technical SEO focuses on improving the infrastructure of a website so that search engines can crawl, index, and interpret its content more effectively.

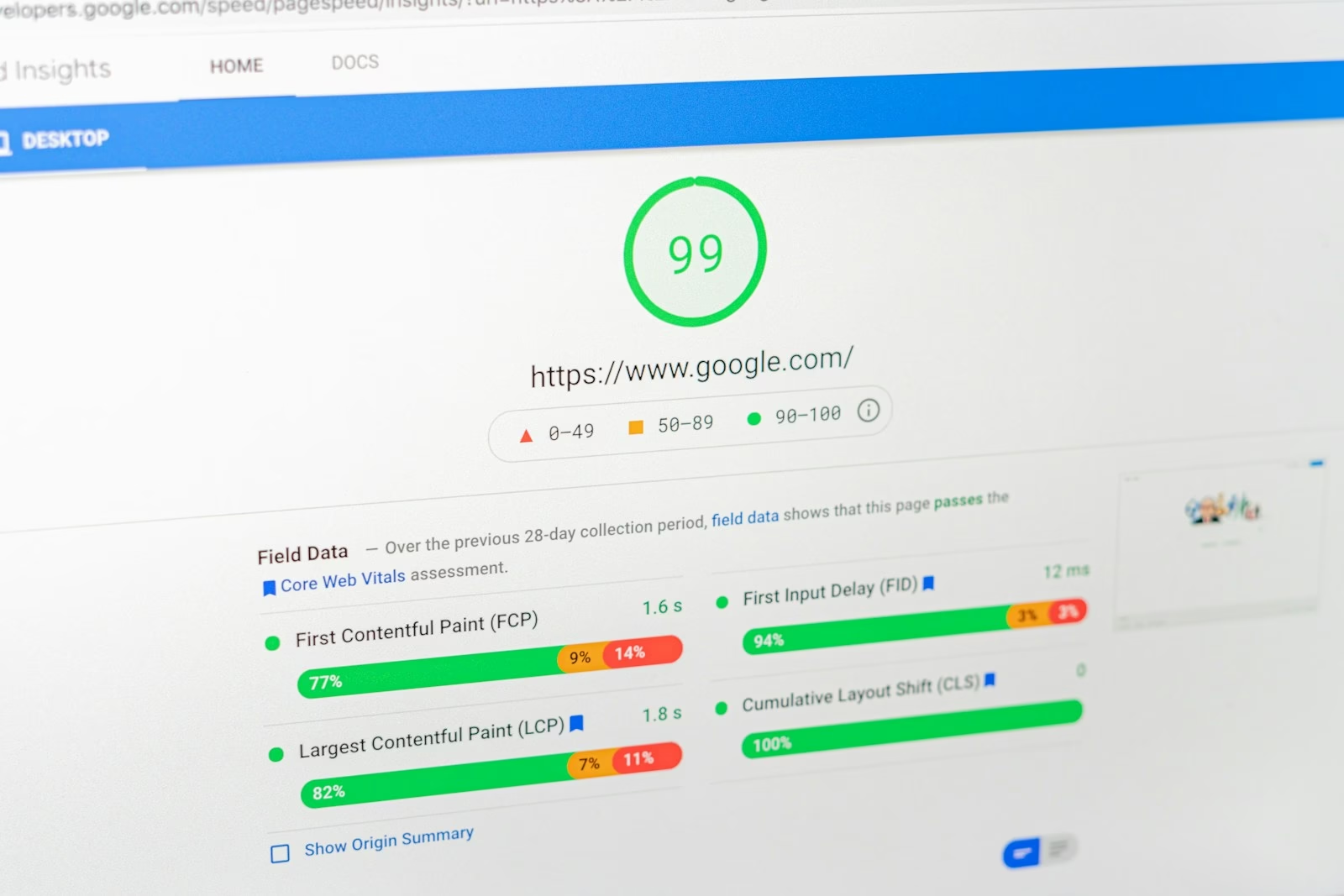

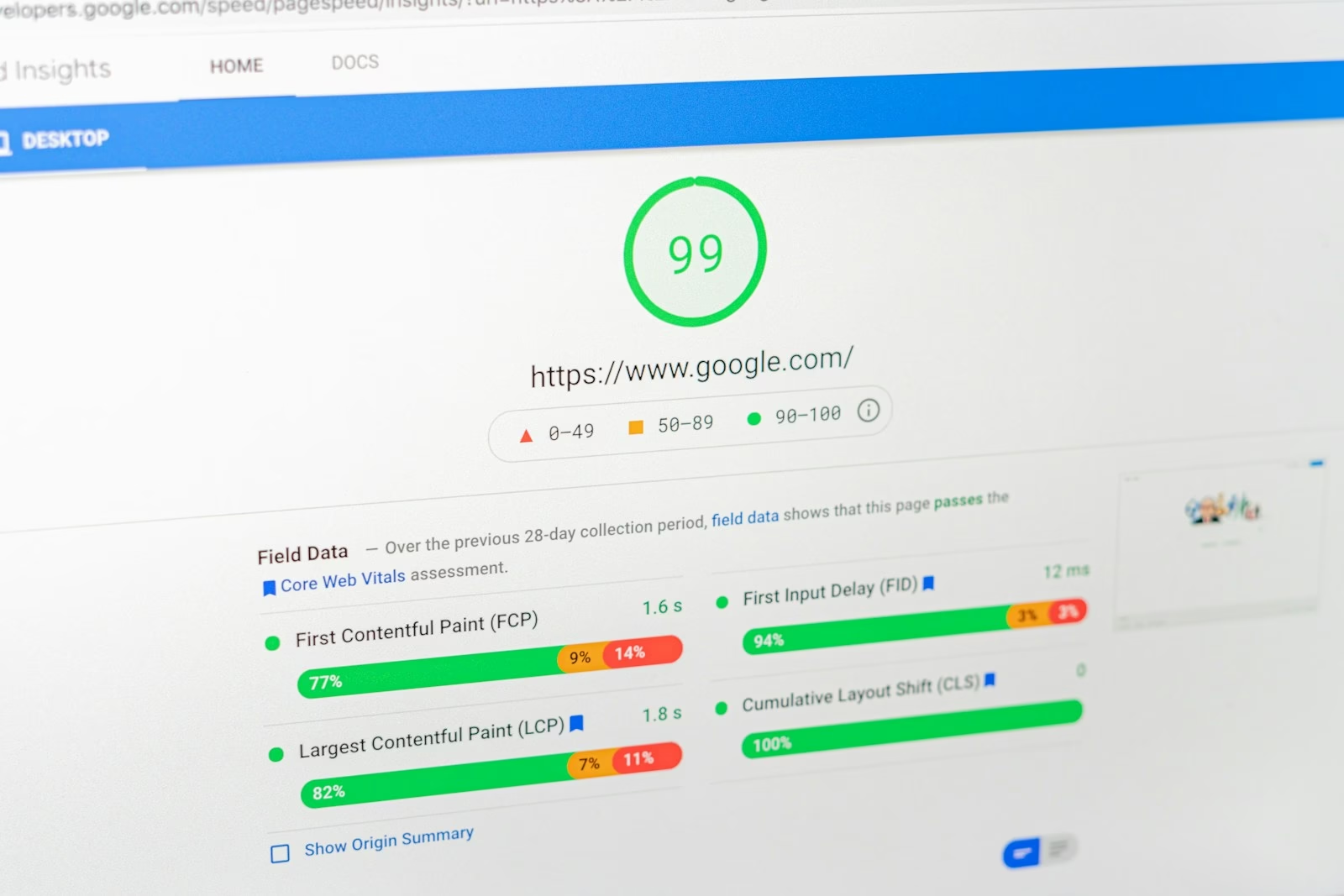

Whether you are new to SEO or building your first website, understanding the technical side of SEO is essential. Without a solid technical setup, even high-quality content may struggle to appear in search results. A well-optimised website lets search engines discover its pages easily and serve them to users searching for related topics. Many of these improvements also contribute to better performance metrics such as Core Web Vitals.

In this guide, we’ll explore what technical SEO is, why it matters, and the key elements that help websites perform better in search engines.

What Is Technical SEO?

Technical SEO refers to the process of optimising the technical aspects of a website to improve how search engines crawl and index its content.

While traditional SEO focuses on content, keywords, and backlinks, technical SEO focuses on the underlying structure of a website.

This includes areas such as:

- site structure

- page speed

- mobile compatibility

- indexing and crawling

- structured data

These elements help search engines understand how pages are organised and how they relate to each other.

Why Technical SEO Matters

Search engines rely on automated bots to discover and evaluate websites. If those bots cannot properly access or interpret a site, its pages may not appear in search results.

Strong technical SEO helps ensure that:

- search engines can crawl your website efficiently

- pages load quickly and perform well

- content is structured clearly

- users receive a smooth browsing experience

Technical optimisation also supports important performance metrics such as Core Web Vitals, which measure loading speed, responsiveness, and visual stability.

How Search Engines Crawl and Index Websites

Before a page appears in search results, it must go through two key processes: crawling and indexing.

Crawling

Search engines use automated bots to discover webpages across the internet. These bots follow links from page to page, gathering information about website content.

Indexing

Once a page is crawled, the search engine analyses its content and stores it in a massive database known as the search index.

Only indexed pages can appear in search results.
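The two steps can be illustrated with a minimal sketch. It is not how any real search engine works: the site is a hypothetical in-memory dictionary, and the names `LinkExtractor`, `crawl`, and `SITE` are invented for illustration. The crawler starts at the homepage, follows links, and stores every page it reaches.

```python
from collections import deque
from html.parser import HTMLParser


class LinkExtractor(HTMLParser):
    """Collects the href of every <a> tag in an HTML document."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def crawl(pages, start):
    """Breadth-first crawl of an in-memory site; returns URL -> HTML for every page reached."""
    index = {}
    queue = deque([start])
    while queue:
        url = queue.popleft()
        if url in index or url not in pages:
            continue  # already stored, or the link points to a page that does not exist
        index[url] = pages[url]  # "indexing": store the page content
        extractor = LinkExtractor()
        extractor.feed(pages[url])
        queue.extend(extractor.links)  # "crawling": follow the links found on the page
    return index


# Hypothetical four-page site; nothing links to /orphan.
SITE = {
    "/": '<a href="/blog">Blog</a>',
    "/blog": '<a href="/">Home</a> <a href="/blog/post">Post</a>',
    "/blog/post": "<p>Hello</p>",
    "/orphan": "<p>Nobody links here</p>",
}

indexed = crawl(SITE, "/")
print(sorted(indexed))  # ['/', '/blog', '/blog/post']
```

The `/orphan` page never enters the index because no link leads to it, which is why internal linking and sitemaps matter for discovery.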

Key Elements of Technical SEO

Several technical factors influence how well a website performs in search engines.

Understanding these core elements helps you make sure search engines can access and interpret your site.

Website Structure

A clear site structure helps both users and search engines navigate a website more easily. Pages should be organised into clear categories, with internal links connecting related content. Strong structure is also an important part of modern web design systems, which keep digital products consistent and scalable.

Good site architecture usually includes:

- logical navigation menus

- internal linking between related pages

- organised categories and content sections

A well-structured site helps search engines discover content faster and understand the relationships between pages.

Page Speed and Performance

Page speed is an important ranking factor and a key part of user experience. Slow websites often lead to higher bounce rates and reduced engagement.

Common ways to improve performance include:

- optimising images

- reducing unnecessary JavaScript

- using caching

- improving server response times

Performance improvements also support metrics such as Core Web Vitals, which measure real-world user experience.
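As a rough illustration, the sketch below rates a set of measurements against the thresholds Google publishes for the three Core Web Vitals: Largest Contentful Paint (loading), Interaction to Next Paint (responsiveness), and Cumulative Layout Shift (visual stability). The metric names are not mentioned above, and the function name, dictionary keys, and sample values are assumptions made for this example.

```python
# (upper bound for "good", upper bound for "needs improvement") per metric.
# Units are encoded in the hypothetical key names.
THRESHOLDS = {
    "lcp_seconds": (2.5, 4.0),
    "inp_ms": (200, 500),
    "cls": (0.1, 0.25),
}


def rate(metric, value):
    """Classify one measurement as 'good', 'needs improvement', or 'poor'."""
    good_max, needs_improvement_max = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value <= needs_improvement_max:
        return "needs improvement"
    return "poor"


# Made-up measurements for a single page.
sample = {"lcp_seconds": 3.1, "inp_ms": 180, "cls": 0.3}
report = {metric: rate(metric, value) for metric, value in sample.items()}
print(report)
# {'lcp_seconds': 'needs improvement', 'inp_ms': 'good', 'cls': 'poor'}
```

Real values would come from a tool such as PageSpeed Insights or Google Search Console rather than being typed in by hand.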

Mobile Friendliness

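Most web searches now take place on mobile devices. Because of this, search engines prioritise websites that perform well on smaller screens. Google, for example, primarily uses the mobile version of a page for indexing and ranking, an approach known as mobile-first indexing.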

Responsive design ensures that websites adapt to different screen sizes without affecting usability.

Mobile-friendly websites typically provide a better experience for users and are more likely to rank well in search results.
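One basic prerequisite for responsive design is a viewport meta tag that tells mobile browsers to use the device width. The sketch below checks a page for it. It is only a first sanity check, not a full mobile audit, and the names `ViewportFinder` and `has_responsive_viewport` are hypothetical.

```python
from html.parser import HTMLParser


class ViewportFinder(HTMLParser):
    """Records the content attribute of <meta name="viewport">, if present."""

    def __init__(self):
        super().__init__()
        self.content = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "viewport":
            self.content = attributes.get("content") or ""


def has_responsive_viewport(html):
    """True if the page declares a viewport that follows the device width."""
    finder = ViewportFinder()
    finder.feed(html)
    if finder.content is None:
        return False
    return "width=device-width" in finder.content.replace(" ", "")


page = '<head><meta name="viewport" content="width=device-width, initial-scale=1"></head>'
print(has_responsive_viewport(page))  # True
```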

HTTPS and Site Security

HTTPS is a confirmed Google ranking factor. Websites that use HTTPS encrypt data exchanged between the browser and the server, protecting users from security risks.

If your website still uses HTTP, migrating to HTTPS is one of the most straightforward technical improvements you can make. Most hosting providers offer free SSL/TLS certificates through services such as Let’s Encrypt.

Search engines are more likely to trust and rank secure websites, and users are more likely to stay on a site that shows a padlock in the browser address bar.

XML Sitemaps

An XML sitemap helps search engines understand the structure of a website and discover its pages.

Sitemaps list important URLs and can carry optional hints about each one, such as:

- when the page was last modified

- how often the page is likely to change

- the page’s priority relative to other URLs on the same site

Submitting a sitemap to search engines can help ensure that important pages are crawled and indexed.
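For illustration, a small sitemap can be generated with Python's standard library. This is a minimal sketch: the `build_sitemap` helper, the example.com URLs, and the dates are invented, while `urlset`, `url`, `loc`, and `lastmod` are the element names defined by the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build sitemap XML from (absolute URL, last-modified date as YYYY-MM-DD) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages and dates.
sitemap_xml = build_sitemap([
    ("https://example.com/", "2024-01-15"),
    ("https://example.com/blog/technical-seo", "2024-02-01"),
])
print(sitemap_xml)
```

The resulting file is usually saved as sitemap.xml at the site root and submitted through a tool such as Google Search Console.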

Robots.txt

A robots.txt file is a simple text file placed at the root of your website that tells search engine bots which pages or sections they are allowed to crawl.

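For example, you may want to stop bots from crawling admin pages, login areas, or duplicate content. Keep in mind that robots.txt controls crawling rather than indexing: a blocked URL can still appear in search results if other pages link to it, so pages that must stay out of the index need a noindex directive instead.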

While robots.txt does not directly improve rankings, it helps search engines focus their crawl budget on the most important parts of your website. Incorrect configuration can accidentally block important pages, so it is worth reviewing regularly.
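A quick way to review a robots.txt file is to test specific paths against it. The sketch below uses Python's built-in `urllib.robotparser` with a hypothetical set of rules embedded as a string. The rules, the paths, and the `is_crawlable` helper are assumptions made for this example.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: block the admin area and the login page for all bots.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /login
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(path, user_agent="*"):
    """True if the rules above allow the given bot to crawl the path."""
    return parser.can_fetch(user_agent, path)


for path in ["/blog/technical-seo", "/admin/settings", "/login"]:
    print(path, is_crawlable(path))
# /blog/technical-seo True
# /admin/settings False
# /login False
```

Running a check like this against a list of important URLs after each deployment is one way to catch an accidental block early.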

Canonical Tags

Canonical tags are a commonly overlooked but important technical SEO element. They tell search engines which version of a page is the primary one when duplicate or similar content exists across multiple URLs.

For example, if a product page is accessible through several different URLs due to filters or tracking parameters, a canonical tag helps search engines consolidate ranking signals on the preferred version. Search engines treat the tag as a strong hint rather than a strict directive.

Without canonical tags, search engines may split ranking signals across duplicate pages, reducing the overall visibility of your content. Adding a canonical tag is a straightforward way to avoid this issue.
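As a sketch of the idea, the code below strips parameters that do not change the page content and renders the matching canonical tag. The parameter list is an assumption: which parameters are safe to ignore depends on the site. The function names and URLs are likewise invented for this example.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed set of parameters that never change what the page shows.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sort"}


def canonical_url(url):
    """Drop ignored query parameters and any fragment from the URL."""
    parts = urlsplit(url)
    kept = [(key, value) for key, value in parse_qsl(parts.query) if key not in IGNORED_PARAMS]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def canonical_tag(url):
    """Render the <link> element that belongs in the page's <head>."""
    return '<link rel="canonical" href="%s">' % escape(canonical_url(url), quote=True)


print(canonical_tag("https://example.com/shoes?utm_source=newsletter&sort=price"))
# <link rel="canonical" href="https://example.com/shoes">
```

Every variant of the product URL would then carry the same tag, pointing at the one preferred address.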

Structured Data

Structured data helps search engines understand the meaning of website content, and it is becoming more important as search results become increasingly AI-driven. Using schema markup allows websites to provide additional context about their pages.

This can enable enhanced search results such as:

- star ratings

- FAQ results

- product information

- event listings

These enhanced results can increase visibility and improve click-through rates.
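Schema markup is commonly added as a JSON-LD script in the page's HTML. The sketch below builds one for a product with a star rating using schema.org's `Product` and `AggregateRating` types. The helper name and the product details are made up, and whether a search engine actually shows an enhanced result is up to the search engine.

```python
import json


def product_json_ld(name, rating, review_count):
    """Return a JSON-LD <script> element describing a product and its rating."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        },
    }
    # Escape "</" so a value can never close the script element early.
    payload = json.dumps(data).replace("</", "<\\/")
    return '<script type="application/ld+json">%s</script>' % payload


# Hypothetical product.
snippet = product_json_ld("Trail Running Shoe", 4.6, 128)
print(snippet)
```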

Common Technical SEO Mistakes

Even well-designed websites can experience technical issues that affect search performance. Understanding common mistakes can help prevent problems that may limit a website’s visibility.

Slow Page Loading

Large images, excessive scripts, or inefficient hosting can slow down websites significantly. Slow pages often lead to poor user experience and lower search rankings.

Broken Links

Broken internal links can prevent search engines from crawling important pages and may frustrate users trying to navigate the site. Regular site audits can help identify and fix these issues.
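At its simplest, a site audit for broken internal links compares every link target against the list of pages that actually exist. The sketch below does this for a hypothetical link graph held in memory. A real audit tool would fetch each URL and check its HTTP status instead.

```python
def find_broken_links(link_graph):
    """Return sorted (source, target) pairs whose target is not a known page."""
    known_pages = set(link_graph)
    return sorted(
        (source, target)
        for source, targets in link_graph.items()
        for target in targets
        if target not in known_pages
    )


# Hypothetical site: each page maps to the internal links it contains.
LINKS = {
    "/": ["/blog", "/about"],
    "/blog": ["/", "/blog/old-post"],
    "/about": [],
}

broken = find_broken_links(LINKS)
print(broken)  # [('/blog', '/blog/old-post')]
```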

Poor Site Structure

If content is buried too deeply within a website, search engines may struggle to find it. Clear navigation and internal linking help ensure that important pages remain accessible.
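How deeply a page is buried can be measured as its click depth: the smallest number of links a visitor has to follow from the homepage to reach it. The sketch below computes this with a breadth-first search over a hypothetical link graph. The threshold of three clicks is an arbitrary assumption for the example, not an official limit.

```python
from collections import deque


def click_depths(link_graph, home="/"):
    """Return the minimum number of clicks from the homepage to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in link_graph.get(page, []):
            if target not in depths:  # first visit is the shortest path in a BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical site where one guide sits three clicks from the homepage.
LINK_GRAPH = {
    "/": ["/blog"],
    "/blog": ["/blog/2023"],
    "/blog/2023": ["/blog/2023/guide"],
    "/blog/2023/guide": [],
}

depths = click_depths(LINK_GRAPH)
deep_pages = [page for page, depth in depths.items() if depth >= 3]
print(deep_pages)  # ['/blog/2023/guide']
```

Linking to a deep page from the homepage or a category page reduces its depth and makes it easier to discover.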

Missing or Incorrect Robots.txt

A misconfigured robots.txt file can accidentally block search engines from crawling important pages. Always check your robots.txt after major website changes to ensure nothing critical is being blocked.

No Canonical Tags on Duplicate Content

Websites with multiple URLs for the same or similar content risk splitting their ranking signals. Canonical tags resolve this by pointing search engines to the preferred version of a page.

Practical Example

Imagine launching a blog with great content but poor technical structure.

If pages load slowly, navigation is confusing, and search engines cannot crawl the site effectively, that content may never reach its audience.

By improving site structure, optimising page speed, and ensuring mobile compatibility, developers create a strong technical foundation that allows content to perform well in search results.

Frequently Asked Questions

What is the difference between technical SEO and regular SEO?

Technical SEO focuses on website infrastructure and performance, while traditional SEO focuses on content, keywords, and backlinks.

Do beginners need to worry about technical SEO?

Yes. Even basic technical improvements such as faster loading speeds, clear navigation, and mobile optimisation can significantly improve search visibility.

How often should technical SEO be reviewed?

Technical SEO should be reviewed regularly, especially after major website updates, design changes, or migrations.

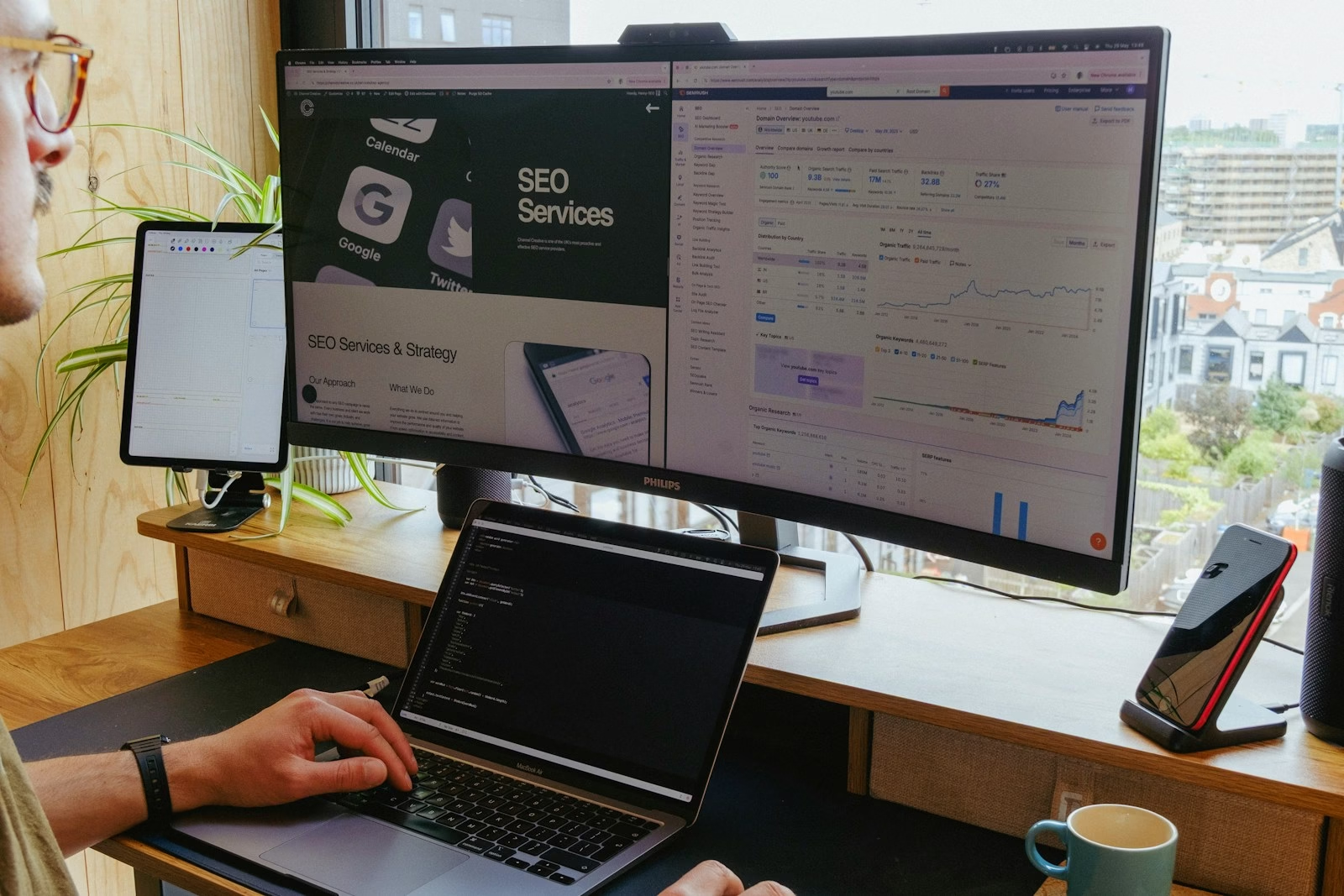

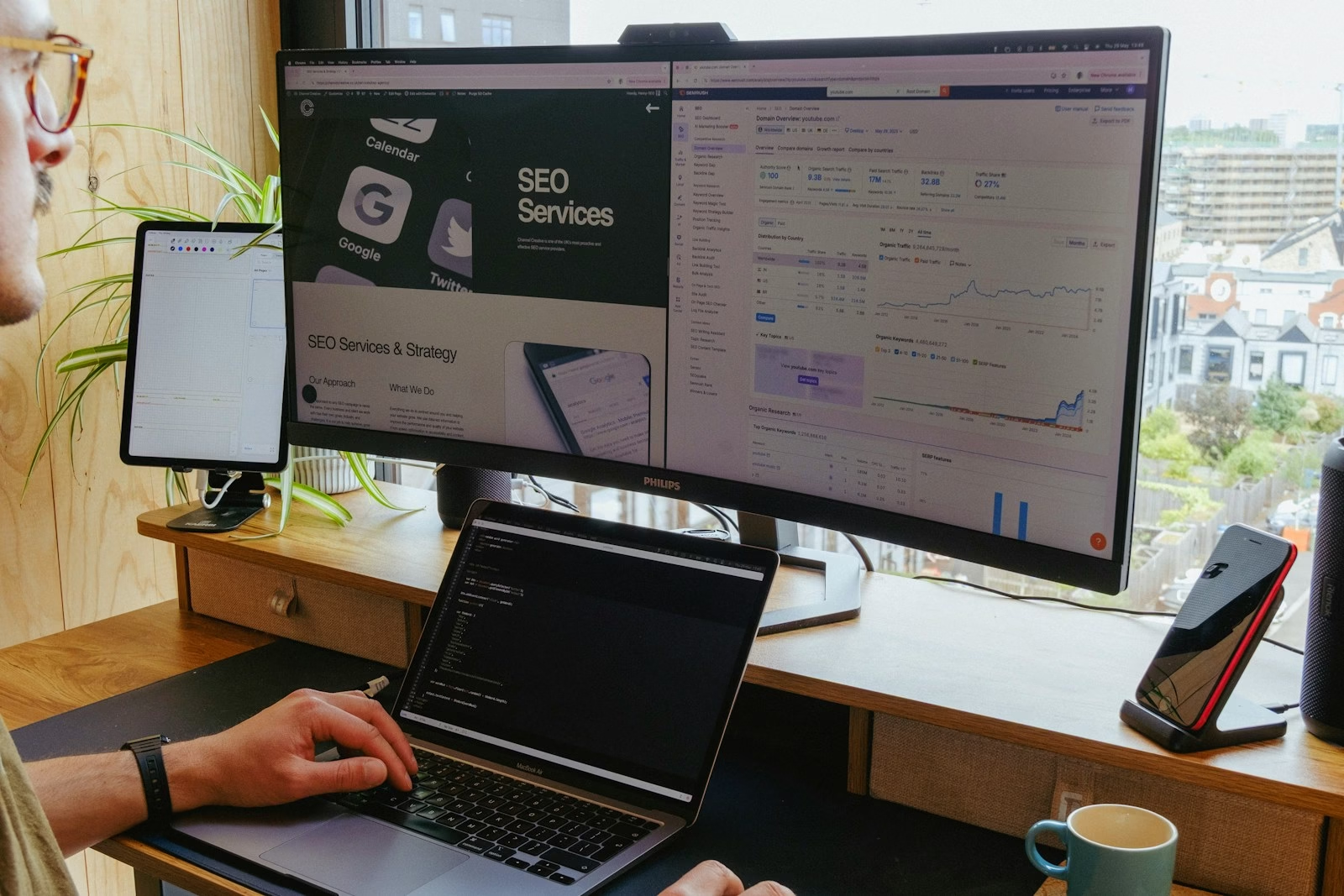

What tools can I use for technical SEO?

Several free and paid tools can help you audit and improve your technical SEO. Google Search Console is the essential starting point, allowing you to monitor crawl errors, indexing issues, and Core Web Vitals. Other popular tools include Screaming Frog for site audits, GTmetrix or PageSpeed Insights for performance testing, and Ahrefs or SEMrush for broader SEO analysis.

Does HTTPS affect my search rankings?

Yes. HTTPS is a confirmed ranking signal used by Google. Websites using HTTPS are considered more secure and trustworthy, which can positively influence search visibility. Migrating from HTTP to HTTPS is one of the simplest technical improvements you can make.

Key Takeaways

- Technical SEO helps search engines crawl and index websites efficiently

- A strong technical foundation improves website performance and usability

- Page speed, site structure, and mobile compatibility are essential elements

- Technical improvements support better search visibility and user experience

Final Thoughts

Technical SEO provides the foundation that allows websites to perform effectively in search engines. While content and keywords remain important, they rely on a strong technical structure to reach their full potential.

By focusing on site performance, crawlability, and structured organisation, developers and website owners can ensure their content is discoverable, accessible, and ready to compete in search results.